Amazon is poised to roll out its newest artificial intelligence chips as the Big Tech group seeks returns on its multibillion-dollar semiconductor investments and to reduce its reliance on market leader Nvidia.

Executives at Amazon’s cloud computing division are spending big on custom chips in the hopes of boosting the efficiency inside its dozens of data centres, ultimately bringing down its own costs as well as those of Amazon Web Services’ customers.

The effort is spearheaded by Annapurna Labs, an Israeli chip start-up that Amazon acquired in early 2015 for $350mn, with operations in Austin.

Annapurna’s latest work is expected to be showcased next month when Amazon announces widespread availability of Trainium 2, part of a line of AI chips aimed at training the largest models.

Trainium 2 is already being tested by Anthropic — the OpenAI competitor that has secured $4bn in backing from Amazon — as well as Databricks, Deutsche Telekom and Japan’s Ricoh and Stockmark.

AWS and Annapurna’s target is to take on Nvidia, one of the world’s most valuable companies thanks to its dominance of the AI processor market.

“We want to be absolutely the best place to run Nvidia,” said Dave Brown, vice-president of compute and networking services at AWS. “But at the same time we think it’s healthy to have an alternative.”

Amazon said Inferentia, another of its lines of specialist AI chips, was already 40 per cent cheaper to run for generating responses from AI models.

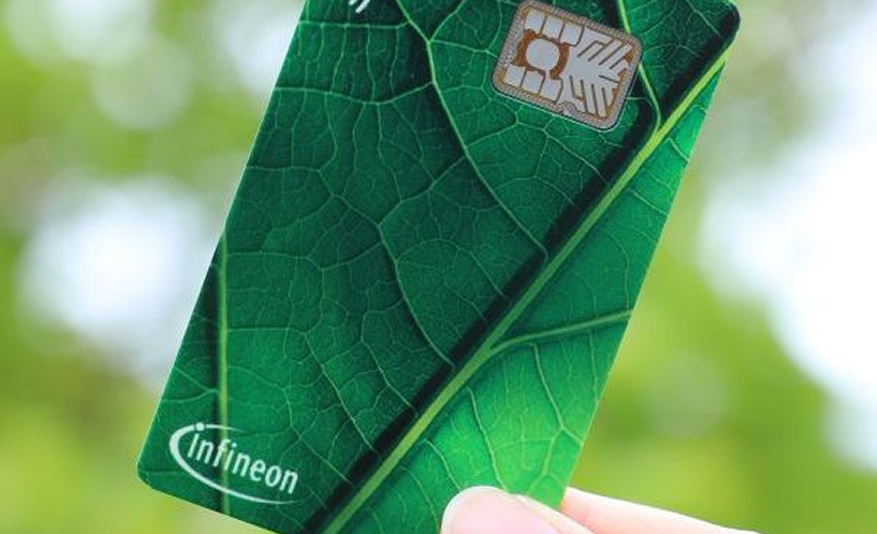

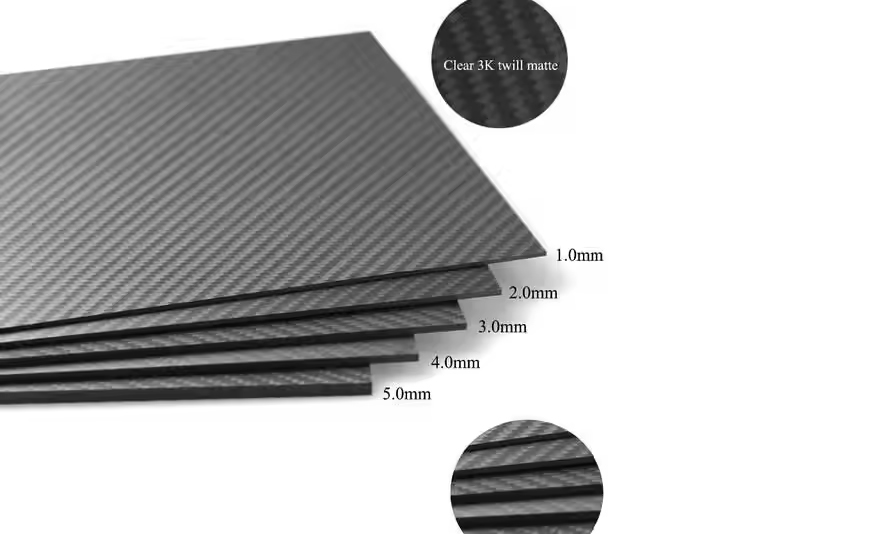

“The price [of cloud computing] tends to be much larger when it comes to machine learning and AI,” said Brown. “When you save 40 per cent of $1,000, it’s not really going to affect your choice. But when you are saving 40 per cent on tens of millions of dollars, it does.”

Amazon now expects about $75bn in capital spending in 2024, with the majority on technology infrastructure. On the company’s latest earnings call, chief executive Andy Jassy said he expected the company would spend even more in 2025.

This represents a surge on 2023, when it spent $48.4bn for the whole year. The biggest cloud providers, including Microsoft and Google, are all engaged in an AI spending spree that shows little sign of abating.

Amazon, Microsoft and Meta are all big customers of Nvidia but are also designing their own data centre chips to lay the foundations for what they hope will be a wave of AI growth.

“Every one of the big cloud providers is feverishly moving towards a more verticalised and, if possible, homogenised and integrated [chip technology] stack,” said Daniel Newman at The Futurum Group.

“Everybody from OpenAI to Apple is looking to build their own chips,” noted Newman, as they sought “lower production cost, higher margins, greater availability and more control”.

“It’s not [just] about the chip, it’s about the full system,” said Rami Sinno, Annapurna’s director of engineering and a veteran of SoftBank’s Arm and Intel.

For Amazon’s AI infrastructure, that means building everything from the ground up, from the silicon wafer to the server racks they fit into, all of it underpinned by Amazon’s proprietary software and architecture. “It’s really hard to do what we do at scale. Not too many companies can,” said Sinno.

After starting out building a security chip for AWS called Nitro, Annapurna has since developed several generations of Graviton, its Arm-based central processing units that provide a low-power alternative to the traditional server workhorses provided by Intel or AMD.

“The big advantage to AWS is their chips can use less power, and their data centres can perhaps be a little more efficient”, driving down costs, said G Dan Hutcheson, analyst at TechInsights. If Nvidia’s graphics processing units were powerful general purpose tools — in automotive terms, like a station wagon or estate car — Amazon could optimise its chips for specific tasks and services, like a compact or hatchback, he said.

However, AWS and Annapurna have barely dented Nvidia’s dominance in AI infrastructure.

Nvidia logged $26.3bn in revenue for AI data centre chip sales in its second fiscal quarter of 2024. That figure is the same as Amazon announced for its entire AWS division in its own second fiscal quarter — only a relatively small fraction of which can be attributed to customers running AI workloads on Annapurna’s infrastructure, according to Hutcheson.

As for the raw performance of AWS chips compared with Nvidia’s, Amazon avoids making direct comparisons and does not submit its chips for independent performance benchmarks.

“Benchmarks are good for that initial ‘hey, should I even consider this chip?’” said Patrick Moorhead, a chip consultant at Moor Insights & Strategy, but the real test was when they were put “in multiple racks put together as a fleet”.

Moorhead said he was confident Amazon’s claims of a four-times performance increase between Trainium 1 and Trainium 2 were accurate, having scrutinised the company for years. But the performance figures may matter less than simply offering customers more choice.

“People appreciate all of the innovation that Nvidia brought, but nobody is comfortable with Nvidia having 90 per cent market share,” he added.
“This can’t last for long.”

This article has been amended to clarify the origin and location of Annapurna Labs.